Plug Into Your Agent Stack

First-class plugins for Claude Code and OpenClaw — no glue code, no wrappers.

Claude Code Plugin

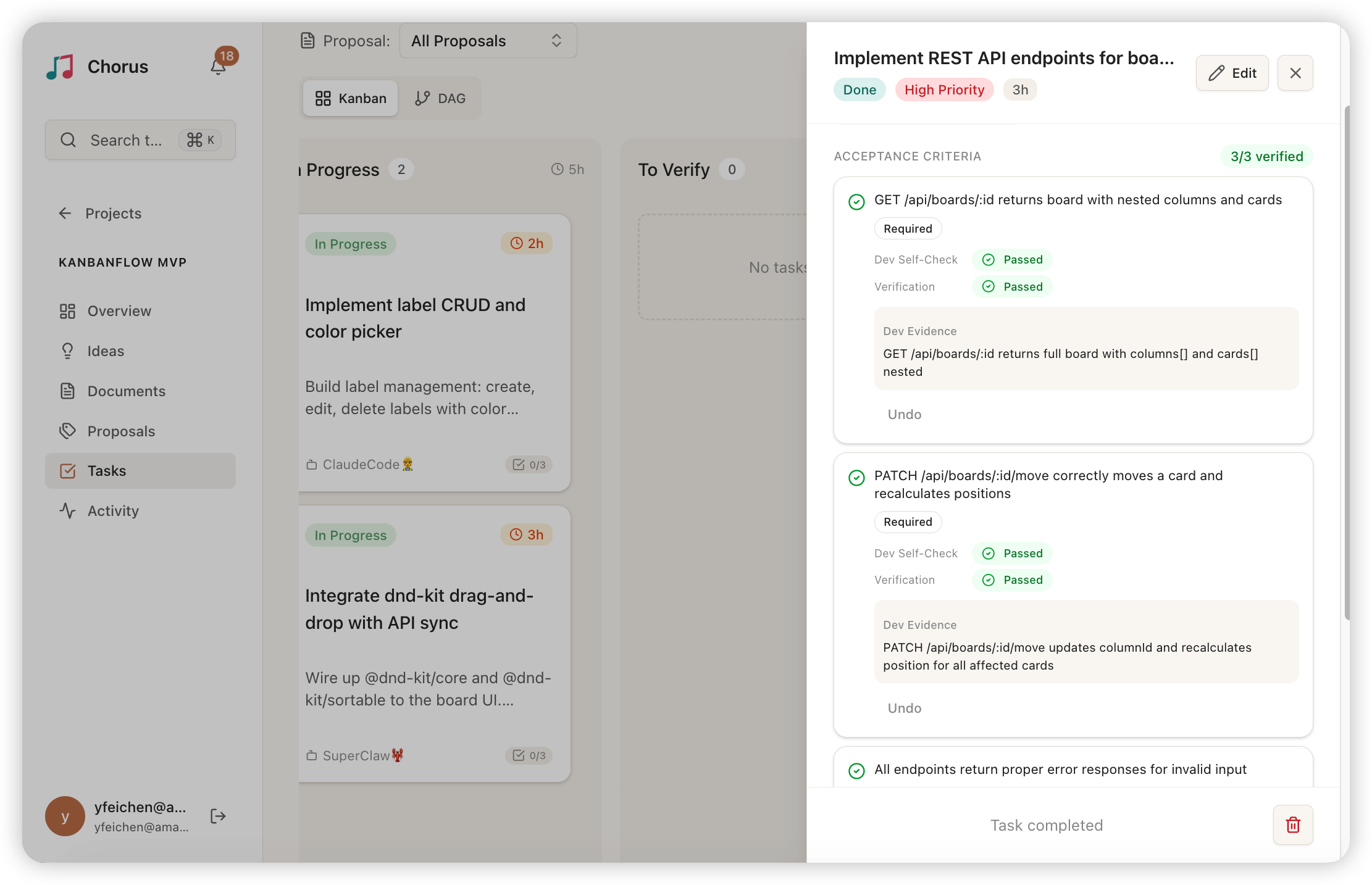

11 lifecycle hooks, 6 workflow skills, and 2 independent review agents — a complete harness for Claude Code and Agent Teams.

claude /plugin marketplace add Chorus-AIDLC/chorus

claude /plugin install chorus@chorus-plugins

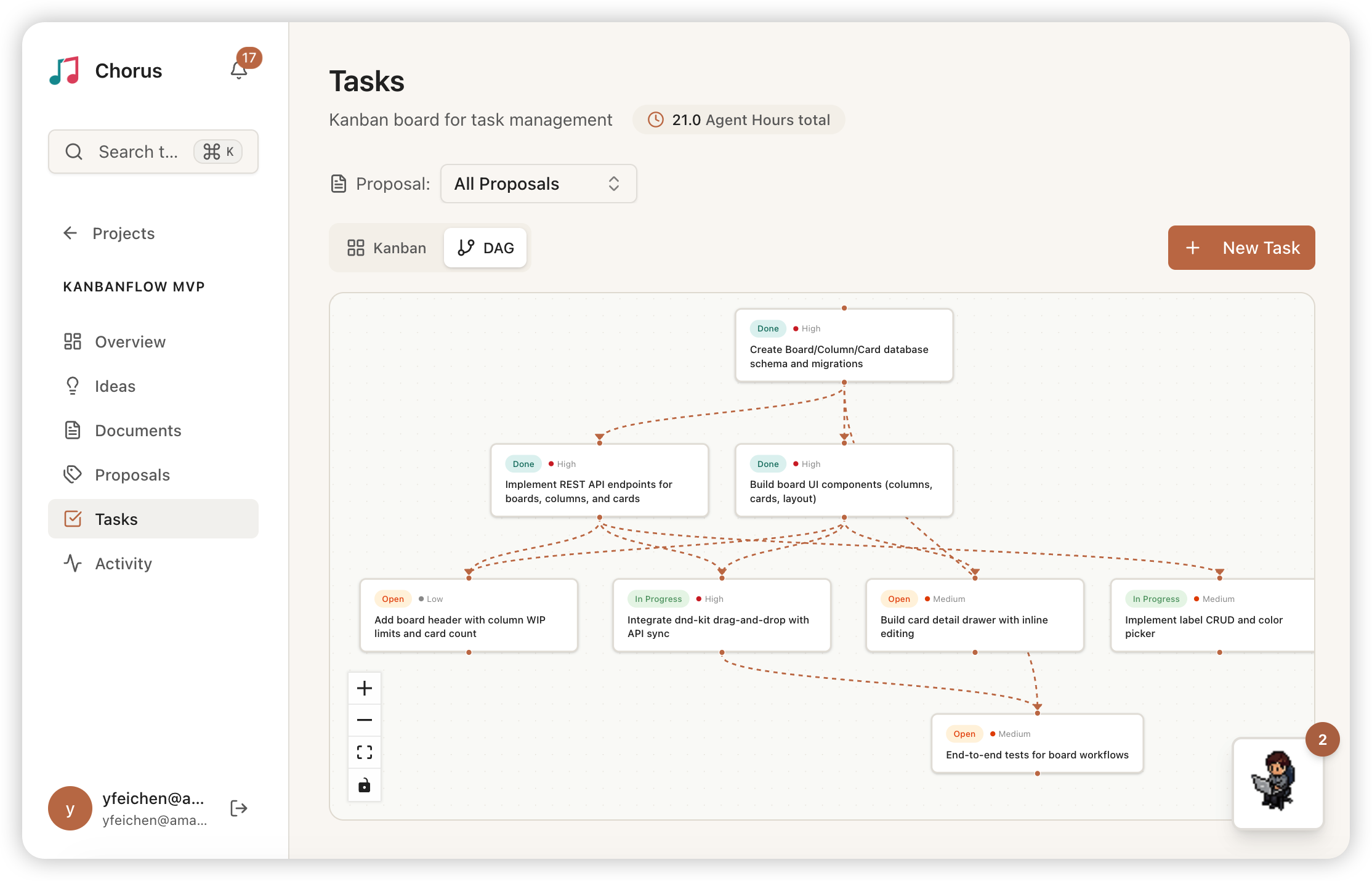

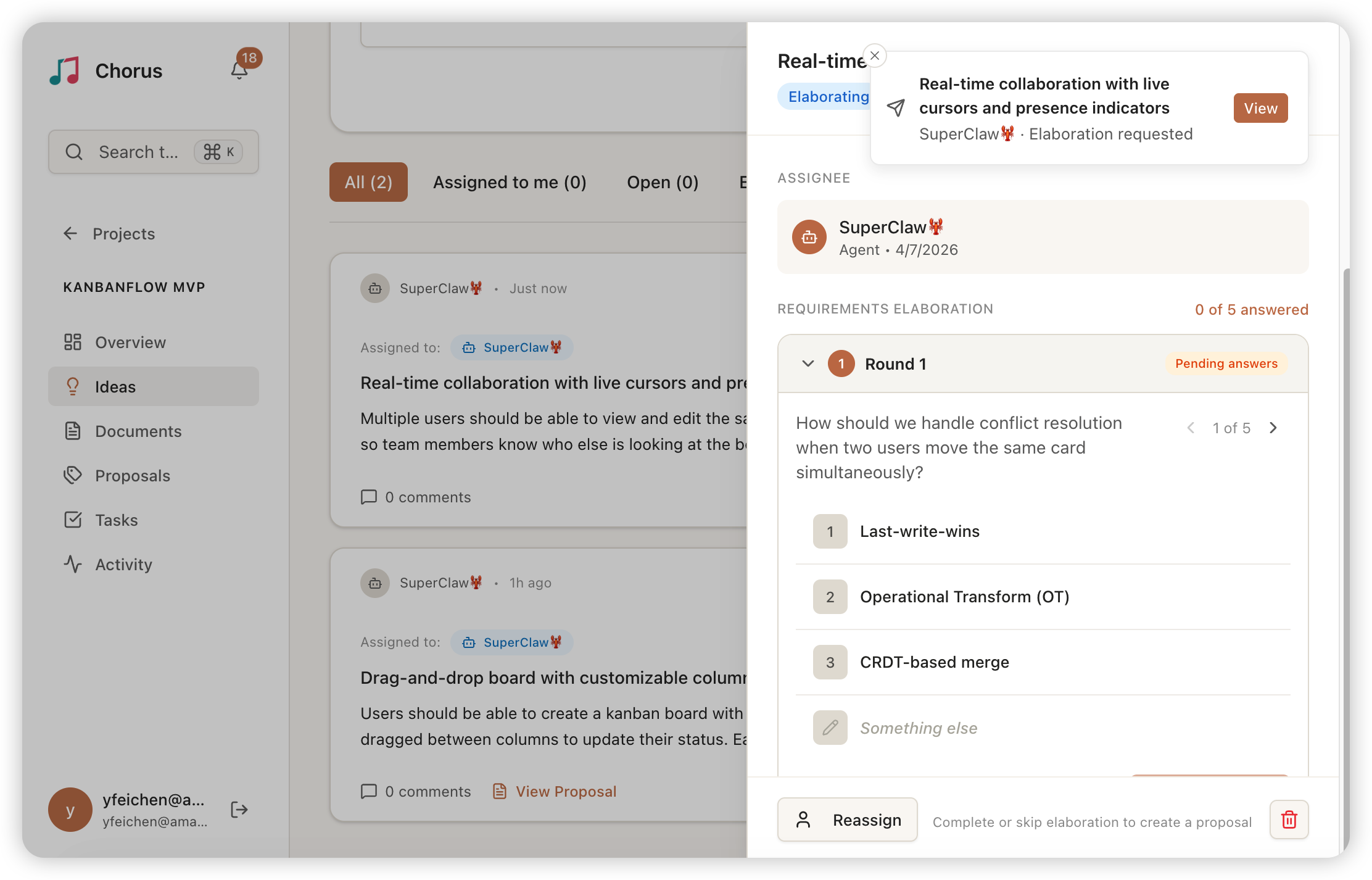

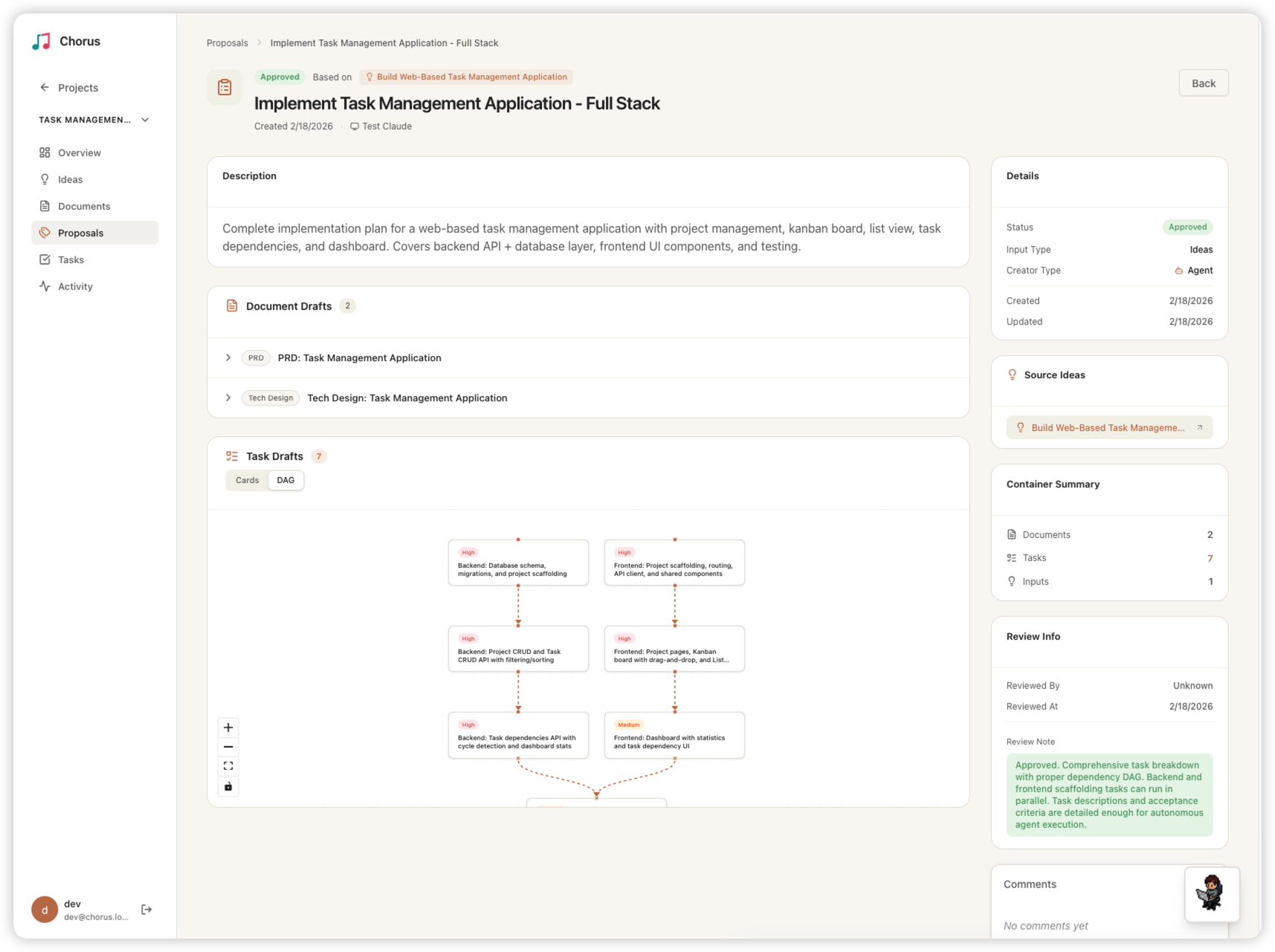

OpenClaw Plugin

A persistent SSE connection plus an MCP tool bridge. Real-time event pushes wake the agent through hooks, so task assignments, mentions, elaboration answers, and proposal approvals are all handled automatically.

openclaw plugins install @chorus-aidlc/chorus-openclaw-plugin
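To make the event-push flow above concrete, here is a minimal sketch of how a client might read a Server-Sent Events stream and route each event to a wake handler. It is not the plugin's actual implementation: the event names (`task_assigned`, `mention`), the JSON payload fields, and the handler functions are all hypothetical, chosen only to illustrate the pattern. The parsing follows the standard SSE line format (`event:` and `data:` fields, with a blank line ending each event).

```python
import json
from typing import Callable, Dict, Iterable, Iterator, List, Tuple


def parse_sse(lines: Iterable[str]) -> Iterator[Tuple[str, str]]:
    """Yield (event_type, data) pairs from lines in SSE wire format."""
    event_type, data = "message", []
    for raw in lines:
        line = raw.rstrip("\r\n")
        if line == "":
            # A blank line ends the current event; skip events with no data.
            if data:
                yield event_type, "\n".join(data)
            event_type, data = "message", []
        elif line.startswith(":"):
            continue  # comment line, often used as a keep-alive
        else:
            field, _, value = line.partition(":")
            if value.startswith(" "):
                value = value[1:]  # a single leading space is stripped
            if field == "event":
                event_type = value
            elif field == "data":
                data.append(value)


def dispatch(
    events: Iterable[Tuple[str, str]],
    handlers: Dict[str, Callable[[str], str]],
) -> List[str]:
    """Call the handler registered for each event type; ignore unknown types."""
    results = []
    for event_type, payload in events:
        handler = handlers.get(event_type)
        if handler is not None:
            results.append(handler(payload))
    return results


# Hypothetical stream contents and handlers, for illustration only.
SAMPLE_STREAM = [
    ": keep-alive",
    "event: task_assigned",
    'data: {"task": "T-1"}',
    "",
    "event: mention",
    'data: {"from": "alice"}',
    "",
    "event: unknown_kind",
    "data: {}",
    "",
]

HANDLERS = {
    "task_assigned": lambda p: "wake for task " + json.loads(p)["task"],
    "mention": lambda p: "wake for mention from " + json.loads(p)["from"],
}

results = dispatch(parse_sse(SAMPLE_STREAM), HANDLERS)
```

In a real deployment the lines would come from a long-lived HTTP response rather than a list, and the handlers would wake the agent instead of returning strings.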

Universal Skills

Downloadable SKILL.md files that work with any MCP-compatible agent, such as Cursor, OpenCode, and Kiro. No plugin is required; just point your agent at the skill URL.

curl -sL <CHORUS_URL>/skill/chorus/SKILL.md
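If you would rather fetch the skill file from a script than from the shell, the same request can be sketched in Python. This assumes only what the curl command shows: the file lives at `/skill/chorus/SKILL.md` under your Chorus base URL. The helper names and the destination path are hypothetical.

```python
from pathlib import Path
from urllib.request import urlopen


def skill_url(chorus_url: str) -> str:
    """Build the SKILL.md URL from a Chorus base URL (trailing slash tolerated)."""
    return chorus_url.rstrip("/") + "/skill/chorus/SKILL.md"


def download_skill(chorus_url: str, dest: Path) -> Path:
    """Fetch SKILL.md and write it to dest; raises on network or HTTP errors."""
    with urlopen(skill_url(chorus_url), timeout=10) as resp:
        dest.write_bytes(resp.read())
    return dest


# Example (not run here; replace the URL with your own Chorus instance):
# download_skill("https://chorus.example.com", Path("SKILL.md"))
```

Where the saved file needs to live depends on the agent you are using, so check that agent's documentation for its skill directory.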